Contents

Camel.Index

CamelIndex is an abstract class for implementing content indexing. It is used by the local mail storage backend to perform content indexing.

It is actually a collection of classes, an index driver, a name lookup, and a cursor class. All of the interfaces are pretty straightforward; consult the source-code for information.

Camel.TextIndex

CamelTextIndex is an implementation of the CamelIndex classes. It uses a CamelPartitionTable and CamelKeyTable to implement a reverse content index. Data is stored in a CamelBlockFile and CamelKeyFile.

Each index consists of 2 hash tables stored in a single Evolution/#Camel.BlockFile, and a list of lists in a [[Evolution/#Camel.KeyFile]. Each hash table is a combination of a Evolution/#Camel.KeyTable and a Evolution/#Camel.PartitionTable, which combine to create a bi-directional hash table (value to id, id to value), with one or two disk accesses required to resolve the answer in each direction.

Camel.KeyFile

A CamelKeyFile is a file which is only ever appended to, and consists of multiple linked lists of camel_key_t indices - which are 32 bit integers. This file is not useful without an external index to say where each list starts, and something to interpret the indices stored in the file.

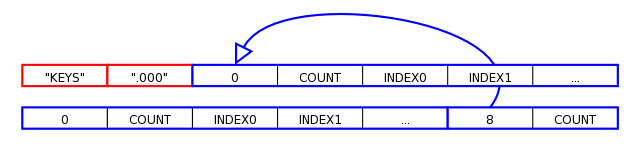

Each file consists of an 8 bit version header - "KEYS.000", followed by the records in a linear fashion.

Each record consists of a parent index, a count, and then count hash indices. The parent index is just the offset in the file for the record. This way, multiple linked lists of records can be built up by only appending to the file. To read back the list, you start at the record root pointer, and then read backwards in the file until you get a ZERO parent pointer.

This diagram shows the start of an example CamelKeyFile. The first-written records are the tails of any linked lists in the file.

Records cannot be removed from a CamelKeyFile (apart from dropping list tails), instead a single-pass process is used in conjuction with the indices to remove deleted entries.

In addition to the basic storage of the data, there is a global file cache used internally so that many CamelKeyFile objects can be opened by the client, without having to keep track of excessive resource usage. This is because one file descriptor is used to access the file, and there may be hundreds of indexes being accessed in a given application instance.

Camel.BlockFile

A CamelBlockFile is a file and interface used to manage block-based access to structured information.

- A block-level cache.

- Manages flushing blocks to disk and loading them from disk.

- Keeps track of the sync'd state of the file.

- Managed block allocation and freeing.

It is used by the CamelPartitionTable class to implement an extensible disk-based hashtable, any number of which can exist in a given CamelBlockFile. Each block can be loaded by block id, which is just the offset of the block in the file. The block size must be a power of two, which allows the code using it to use the lower order bits to store other information.

Like CamelKeyFile, it also has a resource cache to ensure file descriptors are not over-used.

This is probably the primary reason the indexing implementation might be considered slow, as writing randomly to a large file is a heavy i/o load - although having a separate index for every mailbox probably doesn't help.

And yes, it was tried using memory mapping instead, with no appreciable performance improvement.

Camel.KeyTable

A CamelKeyTable is used to assign a unique identifier to a key value (i.e. a word).

This unique identifier will be persistent throughout the life of the KeyTable, and can be converted back into the word using at most 1 disk access. Up to 22 flag bits can also be stored along with the key name, which is used by CamelTextIndex to track which names have been removed from an index.

It can also be used to iterate through all keys in the file, also providing the unique identifier and flags for each key entry. This can be used for instance to implement partial word searches, by applying the search to each unique word - which is a much smaller data-set than the raw content.

It uses a CamelBlockFile backing to manage disk i/o, and so that multiple key tables may exist in a given physical file.

Currently, key files are only ever appended to; to remove unused keys requires a one-pass re-write process.

Root block

The root block is used to track the extent of the list. The root block just has indices for the first block in the keyfile, and the last used block, so that additions to the key table are efficient.

struct _CamelKeyRootBlock {

camel_block_t first;

camel_block_t last;

camel_key_t free; /* free list */

};free is currently unused; allocation is managed by the underlying CamelBlockFile.

Data blocks

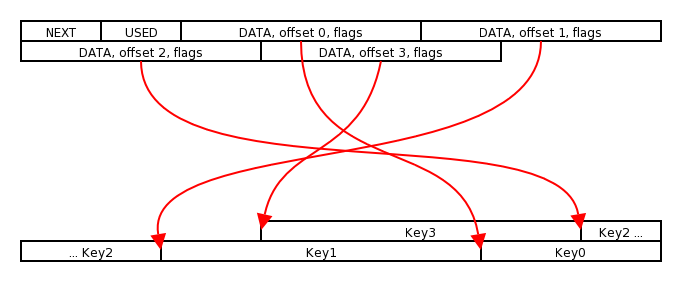

The key data block itself is relatively simple, but leads to fairly complex code.

struct _CamelKeyKey {

camel_block_t data;

unsigned int offset:10;

unsigned int flags:22;

};

struct _CamelKeyBlock {

camel_block_t next;

guint32 used;

union {

struct _CamelKeyKey keys[(CAMEL_BLOCK_SIZE-8)/sizeof(struct _CamelKeyKey)];

char keydata[CAMEL_BLOCK_SIZE-8];

} u;

};Apart from a next pointer, it has a variable-length array of key words packed into the fixed-sized block. It achieves this by having a fixed-record sized portion packed at the start of the block, and the variable-record sized data packed at the end of the data block, in reverse order.

The offset field is a byte offset from the start of the key data block where the key starts. If it is the first key, this is bounded by the block size, otherwise it is bounded by the previous key's start offset. data and flags are free for use by the code calling it - CamelTextIndex uses these for the CamelPartitionTable index and status flags respectively.

The key words are stored completely packed, with no terminating NUL character.

The key id's created consist of two parts. First, the CamelBlockFile block id which is the upper bits of the block number, and the lower bits are the index of the key entry in the array above. Given the way the camel_block_t is implemented, a simple masking process extracts out each component.

So to convert a camel_key_t into a keyword, it is a simple matter of masking out the key index, and loading the data block. Then using the index to look up the key record, together with the surrounding record's offset value, and copying the key value from the record into a string.

Camel.PartitionTable

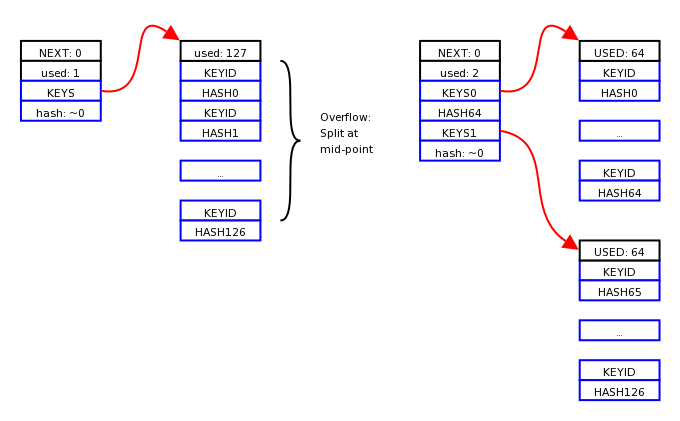

This is an on-disk extensible hash table. It consists of a linear index of blocks called partitions which only contain hash values and pointers to key blocks. These are used to perform a range-search of available blocks based on the hash value. The key blocks only contain hash values and key identifiers - the keys themselves are not stored in them, allowing much greater packing density.

Using a block size of 1024 bytes, this allows for 127 keys per key block, and 16129 per partition block, or at least half that, 8064, assuming worst-case packing. So a key lookup in a typically sized dictionary of 50 000 words will only a scan of 3-6 partitions, and a binary search of a single key block.

This diagram shows what happens when a partition table over-flows. It is split in the middle, and all keys after the the mid-point are moved to a new block (for simplicify the diagram doesn't show where the new key is inserted).

Note that currently hash collisions are not catered for at all; the first entry in the hash will be the one used. With a hash space of 4 billion and a typical key space of 50 000-100 000 entries collisions should be sufficiently rare to be insignificant for our purposes. If it were to be a problem there are various ways around this, the simplest is probably to compare key strings and then re-hash - although this requires that the partition table keep the keys as strings. Another mechanism is to make each partition a radix table, and additional levels for more hash bits until a unique hash is achieved.

Partition blocks

Partition blocks are basically range-search nodes used to easily locate a given key block based on the hash value of the key.

All partition blocks are kept in memory, so a key lookup will always take 1 disk access at most.

struct _CamelPartitionMap {

camel_hash_t hashid;

camel_block_t blockid;

};

struct _CamelPartitionMapBlock {

camel_block_t next;

guint32 used;

struct _CamelPartitionMap partition[(CAMEL_BLOCK_SIZE-8)/sizeof(struct _CamelPartitionMap)];

};Each partition block (CamelPartitionMapBlock infact) is just an array of hash values plus a block pointer to the partition key block for this hash. These are stored in sorted order, keyed on he hashid. The hashid is a boundary marker - all key values in this block are guaranteed to be lower than the hashid.

The partition table is primed by creating a single CamlePartitionMapBlock block which contains a single CamelPartitionMap entry whos hash value is ~0.

Key Blocks

Key data is stored in the key blocks, sorted by the hash value of the key.

struct _CamelPartitionKey {

camel_hash_t hashid;

camel_key_t keyid;

};

struct _CamelPartitionKeyBlock {

guint32 used;

struct _CamelPartitionKey keys[(CAMEL_BLOCK_SIZE-4)/sizeof(struct _CamelPartitionKey)];

};Each key block just contains a key information pointer and the hash id for this key. In this instance the keyid will be a camel_key_t generated by the corresponding CamelKeyTable for this hash.

Normally in a partition table, the key blocks would contain the key strings themselves; but in camel all key strings are stored in the CamelKeyTables instead. This is so that each key can have have a static block allocation for the life of the index - this allows the reverse index, of converting an id into a key, to be created with no extra overhead.

Indexing process

The indexing process consists of associating words with named objects.

# First, a name is created for the object in question. # Then the content of the object is dumped to the name indexer, a buffer at a time. # The processor splits the words in the buffer up, generarating a table of all unique words present. #* Punctuation is dropped and spaces separate words. # Each word in turn is looked up in a word index global to the given index.

# If the word's list reaches a limit:

Because of the potentially vast number of small updates required, large amounts of caching is required to get any sort of performance. The code uses a combination of snooping of the sync method and dynamic cache control in the CamelBlockFile implementation to manage it.

Lookup process

The lookup process is straightforward, iteratable, and relatively cheap.

# The word is looked up in the word partition table, retrieving the word id. # The key table entry is loaded for the word id, retrieving the key file head. # The key file is iterated through, retrieving the nameid of each object which contains this word.

Caching is of little use in this case; the caching is limited greatly in this read-onl mode to save memory.

Subword search

Performing a sub-word search is a little different, but is a subset of the lookup loop above.

# Iterate over all words using the word key table. # Perform a substring match on the word. # If the word matches, then perform steps 2-4 above.

I think currently duplicates are all passed out together, requiring an external process to separate them out.

History and Thoughts

Indexing in Camel was one of the early selling points of Evolution. Evolution has always had lightning fast body content searching because of this indexing feature. It has however, always been problematic code.

libibex

Originally the indexing was based on a (n internal) library called libibex. This indexer was purely memory based, only provided exact word matches and had its own performance problems.

Updates and building a completely new index was relatively fast - starting from an empty index, adding all the words purely in memory, and then writing out the result in one go. Incremental updates become progressively slower - even a single update requires either a load of the entire index or it already to be in memory.

And having multiple indexes in memory, one for each folder, each with their own copy of much the same content, added to the inefficiencies.

libdb based index

To try to get around this problem, of slow incremental update, an attempt was made to use libdb as the storage mechanism for the table. Indexing just one message was of a relatively fixed cost - that is, after thousands of messages, adding just one new one would be faster than using libibex. The problem is that the fast case is no faster, and a bi-directional index must be created.

The result was very slow and very bulky on disk.

CamelIndex

This is when CamelIndex was written. After a few iterations it managed to become relatively performant - in the same order as libibex for building indices from scratch, but much faster at incremental update. The index files are even relatively compact.

It still isn't perfect - it is quite a memory hog when building an index.

It also has some stability issues. There are long-standing bugs which cannot be recreated reliably and could even be from totally unrelated code. And with no fine-grained consistency control, any failure requires a full rebuild of the index.

Again, one fairly major overhead (memory + performance) is that each folder gets its own isolated index, and thus has to copy all of the word items. Given that a typical word vocabulary will consist of 20 000 or more words (particularly if indexing non-language text, like source code patches), duplicating this for each folder is a big overhead. The problem with merging all indices into one however is that any failure would require even more work to rebuild. Perhaps some mixture of files could be created.

What to do?

Remove this feature? One of the original reasons for this code was to allow vFolders to operate quickly. The Evolution/Camel Disksummary Branch implements vFolders in a very different way, where repeated searches of the same data is never performed; so there is some argument for removing the feature. Instead of a general word index, it creates only tailored indices required for the vFolders. And the other use, immediate searching, is starting to be covered by desktop search applications which use their own indices.

Another option is to use another indexing library - there exists some now that didn't exist before. Although almost all of them are designed for batch update, and either run slower or use more memory.

The logic above the index object could do more complex things anyway, e.g. batch index new data independent of lookups, and if data is not indexed, then either index it or perform a manual search in the mean time.